-

HIGH VAULTAGE Fallout TV show SPOILERCAST

Amazon’s TV juggernaut isn't afraid to play around in Bethesda's universe, but do we want it to?

-

WTB BOOST World of Warcraft: The War Within preview - delving into an exciting new saga

The first of three new expansions, The War Within makes a great first impression.

-

HENRY'S BACK Kingdom Come: Deliverance 2 continues the realism-obsessed RPG series - and is set to release later this year

Warhorse Studios returns to Bohemia with a sequel to its realism-focused action RPG.

-

HALO THERE Sony is reportedly looking at making another acquisition, and this time it involves Halo

Ah yes, thinking about buying big things, we definitely don't see much of that from companies nowadays.

-

NUMBER OF THE BUGS-T Helldivers 2 players have managed to kill two billion Terminids so quickly that people are, er, actually trying to do maths

Hang on, let me get my bug-squishing abacus out and ring the guy who used to give me detention.

-

BLAST FROM THE PAST "It feels right" - Fallout's original creator's now properly reviewed Amazon's TV show, and he still likes it

He also touched on the whole Shady Sands thing.

-

You'll probably have to do a bit of unavoidable waiting for updates, but some new tags should make things a bit less confusing.

-

KICK WARRIOR You can now play World of Warcraft in VR - the perfect excuse for your awful DPS

Yeah, raid leaders can check your parses, but they can't check to see if you've got a $500 VR headset on, can they?

-

IT'S RAD The original Fallout games show their age - but newer fans should still give them a shot

Yes, they may look a bit old and outdated, but the foundations of the Fallout universe are perhaps more relevant than ever right now.

-

DOMAIN EXPANSION Jujutsu Kaisen is officially the world's most popular anime, and I can't think of a series that deserves it more

Yes, I know you think your favourite series should be the most popular, but it isn't, so pipe down.

-


COUNT YOUR KAIJU Want to keep that Godzilla train rolling? This season's latest kaiju anime is the one for you

Don't worry, Godzilla Minus One and Godzilla x Kong: The New Empire fans, there's more kaiju ready and waiting for you.

-

SPACE FOR ALL Apple is staying in the space business, as For All Mankind gets a season 5 renewal plus a spinoff

It really likes producing these shows.

-

NO F*CKS GIVEN Brian Cox comes out with guns blazing and tears into Joaquin Phoenix's "truly terrible" Napoleon performance

Well, at least he's being sincere.

-

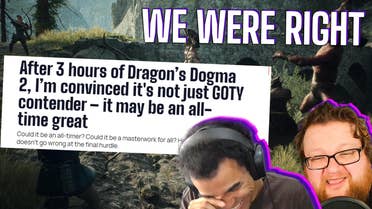

TWISTED FIGHTSTARTER Doom-inspired Dragon's Dogma 2 mod lets you engineer inter-enemy fights by controlling exactly how angry they can get

As Harry Hill would say, there's only one real way to find out whether a Cyclops is better than a wolf.

-

SECOND ATTEMPT The American Oldboy remake turned out horrible, so they're making a TV series next

At least the bar is underground at this point.

-

Best Xbox deals for April 2024

The best deals on Xbox Series X/S, Xbox games, Game Pass, accessories and more.

-

Best PS4 game deals in April 2024

Continue building your PlayStation 4 game library for even cheaper.

-

PS5 stock: latest updates on where to buy the PlayStation 5

Here are all the retailers that currently have Sony's next-gen console in stock.

-

INEVITABLE, REALLY Somehow, Hades 2's new character art has left fans even hornier than the first game, and you can't really blame them

Yup, all of those awoooga noises Tom and Jerry make when they fall in love.

-

TARANTI-NOPE Quentin Tarantino walks back final film plans that would've included a Once Upon a Time in Hollywood character

No, we don't think he's returning to his Star Trek idea.

-

TIME IS RELATIVE Todd Howard steps in to double down that yes, Fallout: New Vegas is canon to Amazon's Fallout

No deviation from the timeline.

-

CRAB RAVE World of Warcraft is fixing a major problem with the help of... Crabs?

Thanks to a new arachnophobia option, crabs are taking over Azeroth and beyond.

-

SECRETS REVEALED World of Warcraft thrives in its experimental phase - Season of Discovery is performing well above expectations

It looks as though Blizzard is still discovering just how popular its classic content can get.

-

GAGA FOR GOGGINS This Fallout 4 mod slaps Walton Goggins' beautiful pre-war face from Amazon's Fallout all over The Commonwealth

He's Cooper Howard, and now he's looming over you as you kill feral ghouls or help a settlement.

-

REAL ONES ONLY Sonic 3 is for "all the real diehard fans" says Idris Elba, and I desperately hope he means there'll be Chao

And maybe some other familiar faces, too.

-

DESTINY'S CROSSROADS Final Fantasy 7 Rebirth's ending was never going to please everyone, and that's fine

Sometimes some things aren't meant for everyone.

-

THE PCS5 IS HERE Ghost of Tsushima Director's Cut can turn your PC into a PS5, well, sort of

I personally can't wait until all the platforms inevitably coalesce into the PlaystatioxboswitchC.

-

OLD-FASHIONED VALUES Want to watch Family Guy forever? Seth MacFarlane sure seems happy to let you do so

"At this point, I don’t see a good reason to stop."

-

A ‘MAGNUM’ OPUS Children of the Sun review - A moody, emotive sniper-puzzle shooter dripping with style

Children of the Sun is a short but special game that definitely delivers bang for your buck.

-

SOUP SANDWICH Rise of the Ronin review - Team Ninja without the bite, or the Nioh heights

Rise of the Ronin is undeniably an evolution of everything Team Ninja has made before; it just picked the wrong path to evolve.

-

PEACHY KEEN Princess Peach: Showtime review – Nintendo's leading lady is anything but asleep at the Switch

Princess Peach proves that she's more than just a damsel that needs rescuing from a castle – and she turns in a marvelous performance whilst doing so.

-

CAVEAT-EMPTY As Star Wars Outlaws locks missions behind $100 paywall, No Rest for the Wicked isn't afraid to buck the trend

No Rest for the Wicked looks to be a refreshing return to a time when games were content to sell you on an experience and leave it at that.

-

BALDUR'S WAIT Don't worry, the next Baldur's Gate shouldn't be over 20 years away, even if it won't come from Larian

It also sounds like Wizards of the Coast would at least consider bringing back some beloved Baldur's Gate 3 characters.

-

RAINBOW POWER Listen to Jack Black's dulcet tones as he seemingly confirms who he's playing in the Minecraft movie

It's exactly who you think it is.

-

MONSTER LOSS PlayStation Plus is about to suffer a big Final Fantasy exodus, but that pales in comparison to the real tragedy

Sure, we all love a good JRPG, but the monster trucks driving off into the sunset is far, far worse.

-

HOW DO YOU DO Former monster and relatable teen Steve Buscemi reportedly cast in Wednesday season 2

The actor knows a thing or two about scary creatures and being in high school.

-

Deals Want to watch Fallout free on Amazon Prime? Non-members are in luck

A free 30-day Amazon Prime trial? Sign me the hell up!

-

About 5% of the company's workforce is reportedly in line to be let go by the end of this year.

-

The film is yet to receive an international release date.

-

He did also scribble down which developer was working on "seducing Benny", so that's cool.

-

TUNE IN Watch today's Nintendo Indie World Direct right here

But will Hollow Knight: Silksong finally turn up?

-


HIGH VAULTAGE Fallout TV show spoilercast: what does it mean for Fallout 5?

Amazon’s TV juggernaut isn't afraid to play around in Bethesda's universe, but do we want it to?

-

VIDEO Dragon's Dogma 2 is Gen-Z Morrowind and I love it

A barnstorming AAA fantasy adventure that pointedly refuses to hold the player's hand? More of that, please.

-

Deals This Turtle Beach headset is great for PC and PS5 and is the cheapest it's ever been

Save 25% on this 2.4GHz wireless headset with a massive battery life.

-

DEFYING GRAVITY Witch actor is going to lead the upcoming Jurassic World sequel? Seems like a wicked, but possibly popular choice has been made

Just follow the yellow brick road and you'll find your lead.

-

BEEP, BEEP, BEEEEEEP Elden Ring's now been beaten using Morse code, and yes, watching it happen will probably make you feel useless at games

A dot and dash dance with death.

-

A NUCLEAR TAKE I don't like the Fallout games, but the show might have made me a convert

The power of television.

-

TROPHY TROUBLE Hopped into Fallout 4 on Xbox after watching the Fallout TV show, but achievements won't unlock? Don't worry, Bethesda's on it

Achievement not unlocked: Return to The Commonwealth.

-

NO PRESSURE, EH? Fallout explained - Amazon's TV show dissected in less time than it takes for the nuke to drop in Fallout 4's intro

Sadly, I'm not quite a post-apocalyptic rap god.

-

FINGERS CROSSED Are we finally going to see Hollow Knight: Silksong at tomorrow’s Indie World Nintendo Direct?

The game was recently rated by the Australian ratings board.

-

PODCAST The best game where your family DITCHES you? The Best Games Ever Podcast episode 95

Blood and water are of equal consistency when irradiated.

-

Podcast Best Games Ever Podcast - Extended Edition Info Page

All the info you need to get your hands on the for-members-only extended edition of VG247's podcast.

-

GROUND NOT BROKEN Good news, Helldivers 2's latest patch ensures the Ground Breaker armour's no longer a victim of accidental false advertising

Given all you go through to earn those samples and requisitions, being able to avoid buyer's remorse is pretty important.

-

BE A PAL Palworld Guide: How to become a Pal Master

Get the most out of the wild islands of Palpagos with our Palworld Guide!

-

The Last of Us 2 safe codes and combinations - all safe locations

All the safe codes and their locations in The Last of Us 2.

-

Naraka Bladepoint Tier List: Best characters for Solos and Trios

These are the best characters in Naraka: Bladepoint as decided by the top pro players!

-

AD BREAK Disney+ is reportedly tuning into the 1930s for its next new feature

TV is back, and worse.

-

EARLY BIRDS Smite 2 shoots to the top of Steam's top sellers entirely through pre-order founder packs

The free-to-play MOBA has made a big splash on Steam, and it's not even out yet.

-

G-G-G-GHOSTS??? Love it or hate it, Velma season 2 gets its first trailer

It certainly looks like more of the same, at the very least.

-

Next Monday, eager fans will be able to tune in and get an update on the upcoming ATLAS RPG.

-

THE NEED FOR SPEED Sonic 3 reportedly nets the ultimate life form, Keanu Reeves, as Shadow the Hedgehog

This marks the second time The Matrix actor has been in the Sonic films, technically.

-

THE BAD FIGHT? Remember Fallout 3's Three Dog? You might get to hear a "darker" DJ voiced by his actor in future Fallout TV series episodes

Watch out chiiiiilldren, the narrator of the good fight has "something new to unleash", assuming he gets his way.

-

BREAKDOWN In a post-Armored Core 6 world, can Mechabreak smash the mecha curse?

Is there even an appetite for more role-heavy multiplayer games in a world with Overwatch and Valorant?

-

THE GHOUL, SPEAKING Yes, you can call that Vault-Tec number from Amazon's Fallout, but you might not like what you hear

I'd rather text anyway.

-

Deals This full-size mechanical gaming keyboard from Logitech is cheaper than its Black Friday price

A great keyboard for all-around use.

-

He also fancies "David Lynch-style" Disco Elysium. You can certainly sign me up for that.
